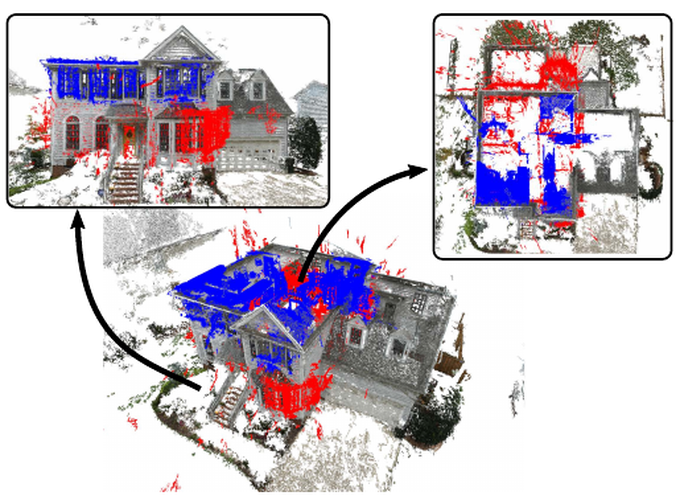

Structure-from-Motion can achieve accurate reconstructions of urban scenes. However, reconstructing the inside and the outside of a building into a single model is very challenging due to the lack of visual overlap and the change of lighting conditions between the two scenes. We propose a solution to align disconnected indoor and outdoor models of the same building into a single 3D model. Our approach leverages semantic information, specifically window detections, in multiple scenes to obtain candidate matches from which an alignment hypothesis can be computed. To determine the best alignment, we propose a novel cost function that takes both the number of window matches and the intersection of the aligned models into account. We evaluate our solution on multiple challenging datasets.

ECCV 2016